Updating Policies Based on Evolving Regulations and Risks

New AI regulations emerge every year. As a responsible company using AI, you need to update your policies whenever the rules that apply to you change.

The process is straightforward: review your existing policies, make the necessary updates, and keep them under version control so everyone works from the latest guidelines.

In some cases, entirely new policies may be needed to cover risks that weren’t previously considered.

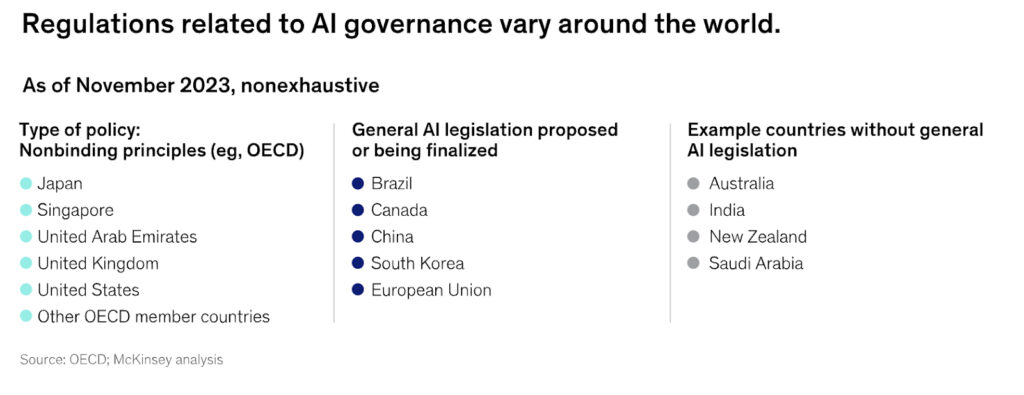

Take a look at the image below to understand how regulations evolve now and then.

Step 1: Set Up a Regular Policy Review Cycle

AI risk doesn’t operate on a set schedule, but your policy reviews should. Establish a structured review process at a cadence that suits your organization (quarterly, biannually, or whenever new regulations emerge) to keep your AI policies current.

- Use HITRUST AI RMF as your foundation. It’s aligned with widely used frameworks and standards such as NIST AI RMF and ISO/IEC 23894, which makes updates smoother.

- Create an internal AI compliance task force. This team should track policy changes and monitor global AI regulatory shifts.

- Monitor regulatory trends. Look for updates from government agencies, industry bodies, and data protection authorities.
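The review cadence described in this step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the interval lengths, the function names, and the `regulation_changed` flag are all assumptions made for the example.

```python
from datetime import date, timedelta

# Hypothetical review intervals in days; adjust to your own cadence.
REVIEW_INTERVAL_DAYS = {"quarterly": 91, "biannually": 182}


def next_review_date(last_review: date, cadence: str) -> date:
    """Return the date on which the next scheduled review falls due."""
    return last_review + timedelta(days=REVIEW_INTERVAL_DAYS[cadence])


def is_review_due(
    last_review: date,
    cadence: str,
    today: date,
    regulation_changed: bool = False,
) -> bool:
    """A review is due when the interval has elapsed OR a tracked regulation changed."""
    return regulation_changed or today >= next_review_date(last_review, cadence)
```

The `regulation_changed` flag reflects the "as new regulations emerge" option: a relevant regulatory change triggers a review even if the scheduled date has not arrived yet.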

Step 2: Identify Gaps and New Risk Areas Regularly

AI systems evolve, and so do their risks. That’s why updating policies without first identifying the gaps is risky. Instead, take a proactive approach:

- Conduct AI risk assessments. Regularly evaluate AI applications for new security, bias, and ethical risks.

- Audit your current AI policies. Are they addressing emerging risks like adversarial AI attacks or data poisoning?

- Use compliance tools like HITRUST’s MyCSF platform. Automate assessments and identify gaps in AI governance.
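At its simplest, a gap audit compares the risks your assessments surface against the risks your policies claim to cover. The sketch below assumes you keep that mapping in a plain dictionary; the policy names and risk labels are hypothetical, and it does not represent how MyCSF or any other compliance tool works internally.

```python
# Hypothetical mapping: which risks each existing policy claims to cover.
POLICY_COVERAGE = {
    "model-security-policy": {"adversarial attacks"},
    "data-governance-policy": {"bias", "privacy"},
}

# Illustrative risks surfaced by the latest risk assessment.
ASSESSED_RISKS = {"adversarial attacks", "data poisoning", "bias", "privacy"}


def find_uncovered_risks(policy_coverage, assessed_risks):
    """Return assessed risks that no policy covers, sorted for stable output."""
    covered = set().union(*policy_coverage.values())
    return sorted(assessed_risks - covered)


gaps = find_uncovered_risks(POLICY_COVERAGE, ASSESSED_RISKS)
print(gaps)
```

With the sample data, the only uncovered risk is data poisoning, which would signal that a policy needs to be updated or a new one written.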

Step 3: Implement Version Control and Documentation

Maintaining a version-controlled record of AI policies and system updates helps teams stay aligned and makes it easier for new members to get up to speed quickly. It also provides visibility into any points of failure or past challenges, aiding in the early identification of potential vulnerabilities.

- Clear history of changes. Newcomers can follow the chronological sequence of updates to understand what policies exist and why they were introduced or revised.

- Reduced confusion. Since each version is clearly labeled and explained, new employees know which documents to reference, reducing training time and mistakes.

- Make policies easily accessible. Ensure teams can quickly reference and follow updated AI governance rules.

- Align with external audits. Use HITRUST AI RMF’s compliance framework to document how policies meet regulatory standards.

- Root cause insights. Detailed change logs record why each update was made. If a particular vulnerability prompted a change, the organization can proactively address similar risks.
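A version-controlled policy record can be as simple as an ordered list of versions, each carrying the reason for the change. The following is a minimal sketch under that assumption; the class names, fields, policy name, and sample entries are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass(frozen=True)
class PolicyVersion:
    version: str
    effective: date
    summary: str  # what changed
    reason: str   # why it changed (supports root cause analysis)


@dataclass
class PolicyRecord:
    name: str
    history: List[PolicyVersion] = field(default_factory=list)

    def publish(self, version: PolicyVersion) -> None:
        """Append a version, keeping the history in chronological order."""
        if self.history and version.effective < self.history[-1].effective:
            raise ValueError("versions must be added in chronological order")
        self.history.append(version)

    def current(self) -> PolicyVersion:
        """Return the latest version, the one teams should reference."""
        return self.history[-1]

    def changes_citing(self, keyword: str) -> List[PolicyVersion]:
        """Find versions whose stated reason mentions the keyword."""
        return [v for v in self.history if keyword.lower() in v.reason.lower()]


# Illustrative data only.
record = PolicyRecord(name="ai-acceptable-use")
record.publish(PolicyVersion("1.0", date(2024, 1, 15),
                             "Initial policy", "Baseline governance"))
record.publish(PolicyVersion("1.1", date(2024, 6, 3),
                             "Added training-data validation rules",
                             "Response to a data poisoning vulnerability"))
```

Here `record.current()` tells a new team member which version to follow, while `record.changes_citing("data poisoning")` surfaces past updates tied to a specific kind of vulnerability.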

Step 4: Train Your Teams on Policy Updates

A policy is only as good as the people following it. If your teams don’t understand AI governance updates, even the best policies won’t make an impact.

- Conduct training sessions. Ensure that employees, developers, and AI teams understand new policies and their implications.

- Use real-world AI case studies. Show how AI risks have played out in other industries and why governance matters.

- Integrate AI risk awareness into workflows. Make policy adherence a natural part of development, testing, and deployment.

Step 5: Assign a Dedicated Trend Watcher to Evolve AI Systems

To maintain an effective AI strategy, you must monitor emerging threats and regulatory changes. This final step requires you to designate a specific individual or a specialized team to track AI advancements continually and to integrate relevant innovations or safeguards into the organization’s systems and policies.

- Monitor industry news, research, and security advisories to identify new developments that could enhance or compromise AI systems.

- Regularly assess whether these advancements introduce better performance opportunities or new risks requiring mitigation.

- Work with technical teams to evaluate whether current solutions should be updated, replaced, or expanded to accommodate new capabilities.